TL;DR: After deploying agents across dozens of SMBs, one question predicts success better than anything else: "Can you describe, step by step, what a human does to complete this task today?" If the answer is clear and specific, the task is automatable. If it's vague, you're not ready. The question works because it simultaneously tests whether something is a real process, whether it's repeatable, and where human judgment actually enters. Most AI failures aren't technology problems. They're targeting problems. Businesses automate the wrong task, automate a broken process, or automate something that doesn't save enough to justify the cost. The question, with one follow-up about cost, catches all three before you spend a pound.

The Pattern Nobody Talks About

After building agents for 47 SMBs across accounting, legal, recruitment, healthcare, and a dozen other industries, we stopped being surprised by which projects succeeded and which ones didn't.

It wasn't budget. Some of the most expensive projects failed. Some of the cheapest ones produced extraordinary returns.

It wasn't technical sophistication. Firms running on spreadsheets and email sometimes got better results than firms with modern tech stacks.

It wasn't team size, industry, or how "ready" the business claimed to be.

One thing predicted it. Every time. A single question we now ask before we build anything:

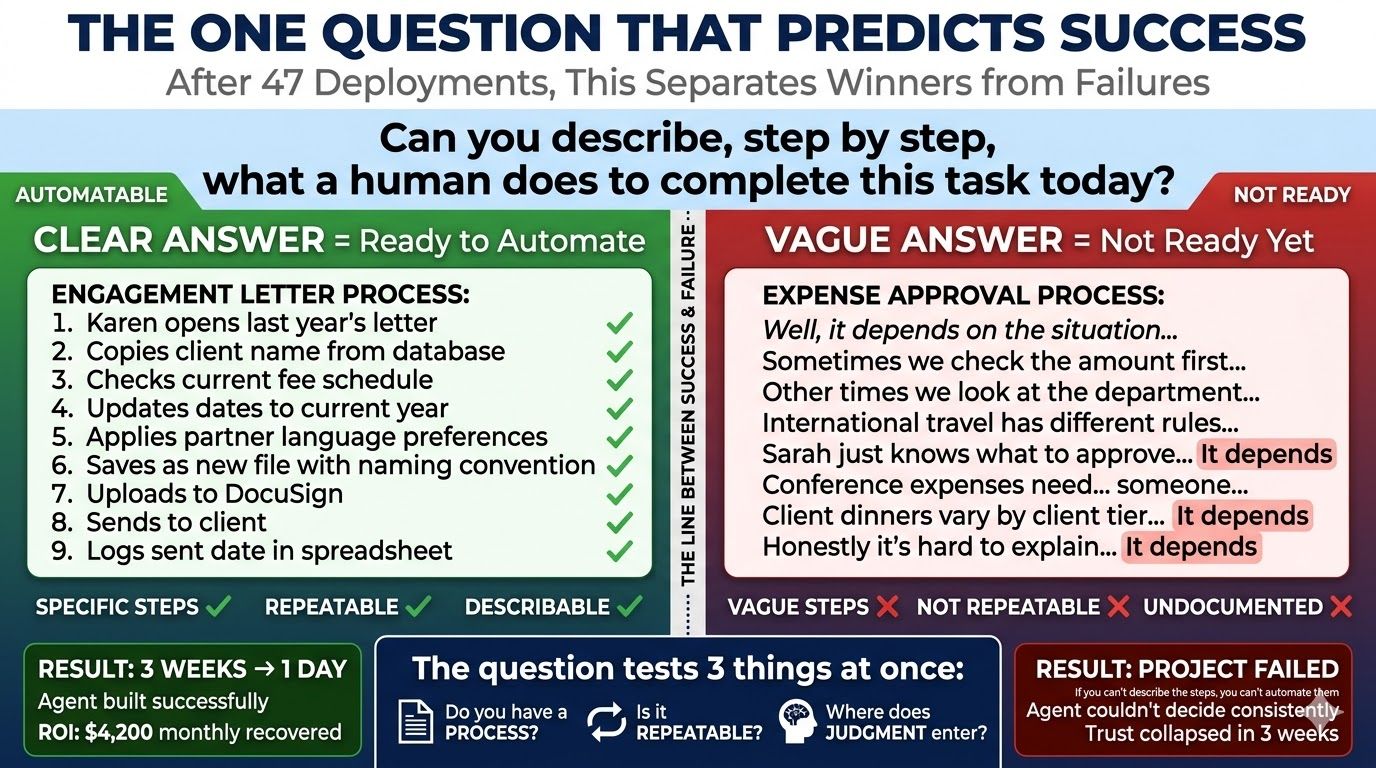

"Can you describe, step by step, what a human does to complete this task today?"

That's it. The entire diagnostic in one sentence.

When the answer is clear and specific, something like "Karen opens last year's engagement letter, copies the client name, checks the current fee schedule, updates the dates, applies the partner's language preferences, saves as a new file, uploads to DocuSign, and sends," the task is automatable. We can see the steps. We can encode them. We can build something that does them faster and more consistently than a person copying and pasting in Word.

When the answer is vague, something like "Well, it depends on the situation, sometimes we do this, other times we do that, and honestly it varies by who's handling it," you're not ready. Not because the technology can't handle complexity. Because you don't have a process. You have a habit. And habits can't be automated.

Why This One Question Works

The question tests three things at once, which is why it's so reliable.

It tests whether you actually have a process. If you can describe the steps, you have a process. If you can't, you have tribal knowledge living in someone's head. You can't automate what you can't describe.

The Edinburgh physio clinic is a good example. When we first asked Fiona to describe the referral tracking process, she paused. "I check the emails when I can. I enter them into the spreadsheet. I try to book people in." That's not a process. That's "Fiona does it when she has time." The first step wasn't building an agent. It was documenting what Fiona actually did, step by step, so there was something concrete to automate.

It tests whether the task is repeatable. If the steps are essentially the same every time, with minor variations, an agent can handle it. If every instance is genuinely unique, it needs a human.

The Nashville law firm's conflicts check: the search process is the same every time. Query the database, run fuzzy matching, traverse corporate hierarchies, cross-reference opposing parties. Repeatable. An agent does it in 8 minutes. But the legal judgment about what to do with a flagged conflict, whether it's waivable, whether an ethical wall suffices, whether the firm should decline, is different every time. That stays human.

It reveals where human judgment actually matters. This is the crucial one. Most tasks aren't purely mechanical or purely judgmental. In our experience they're closer to 80% process and 20% judgment. The question shows you where the line is.

The Portland accounting firm's engagement letters: generating the letter from client data, selecting the template, populating fields, checking against last year's version? That's process. Deciding whether a specific long-tenure client needs a custom note from their partner? That's judgment. When you can see the line, you automate up to it and stop. The agent handles the 80%. The human handles the 20%. Both do what they're best at.
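One way to make that line visible is to write the steps down as data and flag the ones that need a person. The sketch below is only an illustration: the `Step` class and `split_at_judgment` function are hypothetical names, and the step wording is paraphrased from the Portland example rather than taken from the firm's real checklist.

```python
from dataclasses import dataclass


@dataclass
class Step:
    description: str
    # True where a person makes a call that isn't rule-based (assumed flag)
    needs_judgment: bool = False


def split_at_judgment(steps):
    """Return (agent_steps, human_steps): everything before the first
    judgment step goes to the agent, the rest stays with a person."""
    for i, step in enumerate(steps):
        if step.needs_judgment:
            return steps[:i], steps[i:]
    return steps, []


# Paraphrased from the engagement letter example; not the firm's actual list.
engagement_letter = [
    Step("Generate the letter from client data"),
    Step("Select the template"),
    Step("Populate fields"),
    Step("Check against last year's version"),
    Step("Decide whether a long-tenure client needs a custom partner note",
         needs_judgment=True),
]

agent_steps, human_steps = split_at_judgment(engagement_letter)
print(len(agent_steps), "steps for the agent,", len(human_steps), "for the human")
```

Here the agent gets the first four steps and the partner keeps the last one. Splitting at the first judgment point is the simplest reading of "automate up to it and stop"; a real build might hand control back to the agent after the human decision.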

Three Ways Businesses Get This Wrong

The failures we've seen cluster into three patterns. The question catches the first two on its own and the third with one follow-up, but only if you're honest about the answers.

Failure 1: Automating the wrong task.

The business picks a task because it's annoying, not because it's describable. "We hate expense reports." So they try to automate expense approvals. But their expense policy has 47 exceptions that nobody's documented. International travel has different rules from domestic. Client dinners have different limits depending on the client tier. Conference expenses need pre-approval from a different person depending on the department.

The agent starts making decisions based on incomplete rules. It approves things it shouldn't. Rejects things it should approve. Trust collapses within weeks. Project abandoned. "AI doesn't work for us."

The question would've caught it. "Can you describe the approval process step by step?" "Well, it depends on the department, the amount, who's travelling, whether it's a client dinner, and honestly Sarah just knows what to approve." Not ready. Document the policy first. Then automate it.

Failure 2: Automating a broken process.

The process exists. You can describe the steps. But the steps are already failing when humans follow them. Automating a broken process doesn't fix it. It creates automated errors at machine speed.

If the Portland accounting firm had automated their copy-paste engagement letter method without fixing the underlying problem, they'd have automated the fee errors too. Four hundred and twelve letters, generated in an hour instead of three weeks, with the same 5.6% error rate. Twenty-three wrong letters, just delivered faster. The quality check stage exists in the agent specifically because the old process didn't have one.

The question catches this too. When describing the steps reveals contradictions, workarounds, or the phrase "and then someone usually catches it," the process needs repair before it needs automation.

Failure 3: Automating something that doesn't matter enough.

The task is describable. It's repeatable. The steps are clear. But saving time on it doesn't move the needle.

A process that takes 20 minutes per week costs about 17 hours per year. At $50 per hour, that's $850 of annual labour. If the agent costs $150 per month, you're spending $1,800 to save $850. You've lost money.

The question alone doesn't catch this one. You need the follow-up: "How many hours per week does this consume, and what's the hourly cost of the person doing it?" If the maths doesn't work at a 3x return or better, save your money.
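The arithmetic is easy to check for your own numbers. This is a minimal sketch, assuming the agent removes all of the manual time and that the only cost is a flat monthly fee; the function names are made up for illustration. Without rounding to 17 hours, the example above comes out at roughly $867 rather than $850, which doesn't change the verdict.

```python
WEEKS_PER_YEAR = 52


def annual_manual_cost(minutes_per_week, hourly_rate):
    """Yearly labour cost of keeping the task manual."""
    hours_per_year = minutes_per_week / 60 * WEEKS_PER_YEAR
    return hours_per_year * hourly_rate


def return_multiple(minutes_per_week, hourly_rate, agent_cost_per_month):
    """Annual manual cost divided by annual agent cost.
    Assumes the agent eliminates all of the manual time."""
    annual_agent_cost = agent_cost_per_month * 12
    return annual_manual_cost(minutes_per_week, hourly_rate) / annual_agent_cost


# The figures from the example: 20 minutes a week, $50 an hour, $150 a month.
saving = annual_manual_cost(20, 50)
multiple = return_multiple(20, 50, 150)

print(f"Annual manual cost: ${saving:,.0f}")
print(f"Return multiple: {multiple:.2f}x")
print("Worth building" if multiple >= 3 else "Save your money")
```

A multiple below 1 means the agent costs more than the labour it replaces. The 3x bar is the threshold used in this article, not a universal rule.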

How to Use the Question This Week

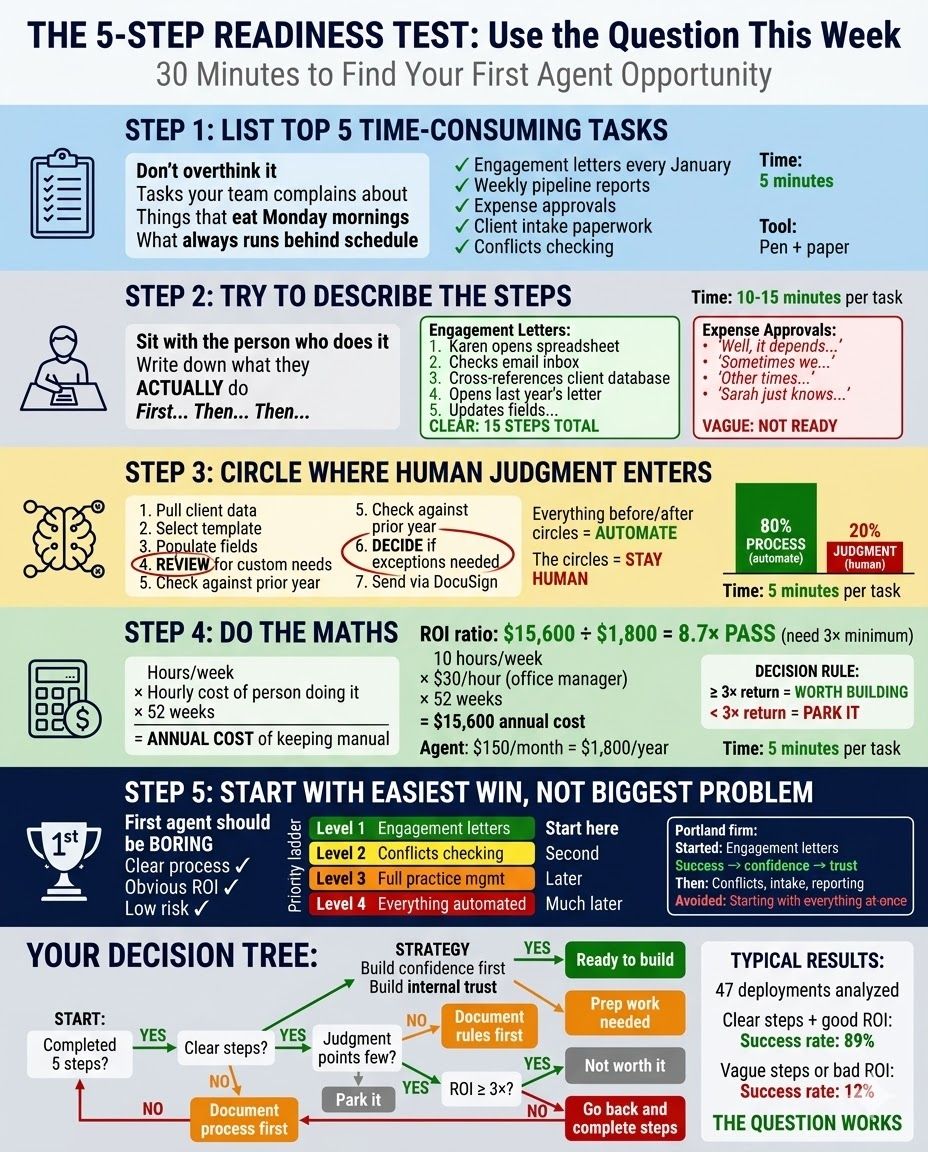

You don't need a consultant for this. You need a pen and 30 minutes.

Step 1: List your top five time-consuming repetitive tasks. Don't overthink it. The things your team complains about. The things that eat Monday mornings. The things that always run behind schedule. You probably know them already. Write them down.

Step 2: For each task, try to describe the steps. Sit with the person who does it. Write down what they actually do. "First, Karen opens the spreadsheet. Then she checks the email inbox. Then she cross-references against the client database." Five to fifteen clear steps means it's a candidate. If you write "it depends" more than twice, it's not ready yet.

Step 3: Mark where human judgment enters. In your step list, circle the moments where someone makes a decision that isn't rule-based. Everything before and after those circles is automatable. The circles stay human.

Step 4: Do the maths. Hours per week times the hourly cost of the person doing it times 52 weeks equals the annual cost of keeping it manual. If that number is at least three times the estimated annual agent cost, it's worth building. If it's less, park it.

Step 5: Start with the easiest win, not the biggest problem. Your first agent should be boring. A clear process, obvious ROI, low risk. Build confidence. Build internal trust. Then tackle the complex ones. The firm that starts with engagement letter automation before attempting full practice management AI is the firm that succeeds.
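If you'd rather do this in a script than on paper, the rules of thumb from Steps 2 and 4 can be sketched as one small check. Everything here is illustrative: the `readiness` function, the example step lists, and the cost figures are hypothetical. Treating a step list outside the five-to-fifteen range as "needs a closer look" is our reading of the guideline, not a hard rule.

```python
def readiness(steps, hours_per_week, hourly_rate, agent_cost_per_year):
    """Apply the Step 2 and Step 4 rules of thumb to one task."""
    # Step 2: more than two "it depends" means the process isn't documented yet.
    it_depends = sum(s.lower().count("it depends") for s in steps)
    if it_depends > 2:
        return "not ready: document the process first"
    # Step 2: five to fifteen clear steps marks a candidate (assumed as a gate here).
    if not 5 <= len(steps) <= 15:
        return "needs a closer look: step count outside 5-15"
    # Step 4: annual manual cost should be at least 3x the annual agent cost.
    annual_cost = hours_per_week * hourly_rate * 52
    if annual_cost < 3 * agent_cost_per_year:
        return "park it: below a 3x return"
    return "candidate"


# Hypothetical step lists, written for illustration only.
clear_steps = [
    "Open the referrals inbox",
    "Copy the patient details into the spreadsheet",
    "Check the clinician calendar",
    "Offer the patient the next free slot",
    "Confirm the booking by email",
    "Mark the referral as booked",
]
vague_steps = [
    "It depends on the department",
    "It depends on the amount",
    "It depends on who's travelling",
    "Sarah just knows what to approve",
]

print(readiness(clear_steps, hours_per_week=5, hourly_rate=30, agent_cost_per_year=1800))
print(readiness(vague_steps, hours_per_week=5, hourly_rate=30, agent_cost_per_year=1800))
```

The judgment circles from Step 3 are left out on purpose: marking them is a conversation with the person who does the work, not something a string count can decide.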

The Question in Action

Quick look at how this played out across recent builds:

Engagement letters, Portland. "Can you describe the steps?" Yes. Fifteen clear steps. Human judgment only at the review stage. We automated everything before the review. Three weeks of Karen's January became one day. The question predicted it.

Conflicts check, Nashville. "Can you describe the steps?" Yes. Eight steps for the search process. But human judgment is required at the decision stage. We automated the search, kept the human for the decision. Two to three hours per matter became 8 minutes. The question drew the line.

Candidate pipeline, Melbourne. "Can you describe the steps?" Partially. Each consultant tracked candidates differently. The process needed standardising before velocity tracking could work. We documented the stages first, then built the agent. The question revealed the gap.

Patient referrals, Edinburgh. "Can you describe the steps?" Fiona could, but she was the only one who knew them. Documenting her process was step one. Automating it was step two. The question exposed the single point of failure.

Four projects. Same question. Four different things revealed about readiness. All four succeeded because the question was asked before the build started, not after.

Before You Spend a Pound

The hype says AI can do anything. It can't. What it can do is follow a process faster, more consistently, and more cheaply than a person doing it manually. But only if the process is actually a process.

Before you invest in any AI tool, agent, or automation: pick one task. Sit down with the person who does it. Ask them to describe every step. Write it down. Find the judgment points. Calculate the cost.

If the steps are clear, the judgment points are few, and the cost justifies the build, you've found your first agent. If not, you've saved yourself from a project that was going to fail anyway. Either way, the question did its job.

Want to walk through this process with guidance? The AI Bottleneck Audit applies this framework to your specific business in 5 minutes. No pitch. Just the answer.

Want to see 31 real examples of businesses that asked the question? Download Unstuck. Every one started with the same question. Every one revealed something different about readiness.

by DD & SP, CEO

for the AdAI Ed. Team