TL;DR: A digital marketing agency in Austin was sending 500 cold emails a week with a 2.2% reply rate. The prospects were right. The message was wrong. Three templates with swapped first names and company names. The BD lead knew the fix: research each prospect and write something specific. He'd done it manually for 20 dream clients and hit 35%. But he couldn't research 500 prospects a week. We built a five-stage agent that researches each prospect across their website, LinkedIn, job postings, and industry news, extracts 2-3 personalisation hooks, drafts messages using the BD lead's proven framework, and tracks which signals drive the highest reply rates. Reply rate climbed from 2.2% to 8.7%. Weekly conversations went from 5 to 25-plus. Three new clients closed in Q1. Agent cost: $340/month.

The Agency That Couldn't Market Itself

The irony wasn't lost on anyone in the office. A digital marketing agency, a company that charged clients $8,000 a month to generate leads, couldn't generate its own.

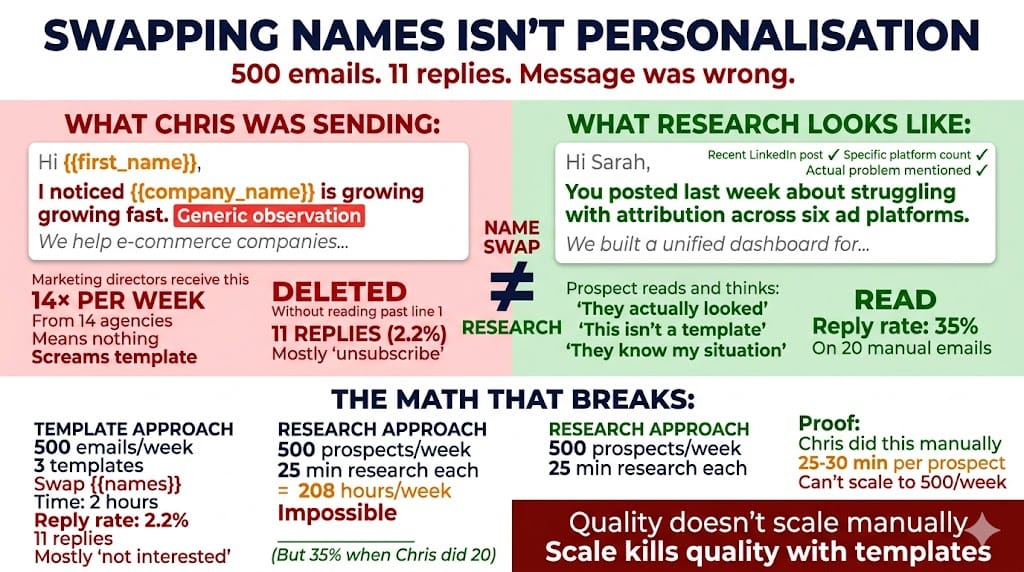

Not for lack of trying. Chris, the BD lead, was sending 500 cold emails per week. Open rates: 42%. Decent. Subject lines were fine. Deliverability was fine. Reply rates: 2.2%. Terrible. Eleven replies out of 500 emails. Roughly half of those were "not interested" or "unsubscribe."

The prospects were right. Marketing directors and CMOs at mid-market e-commerce companies doing $5M to $50M. Good ICP. Verified emails via Apollo. Clean list.

The problem was the message. Chris had three templates. Each one opened with a line like "I noticed {{company_name}} is growing fast." A line that every marketing director receives fourteen times a week from fourteen different agencies. It meant nothing. It screamed template. And it got treated the way templates get treated: deleted without reading past the first sentence.

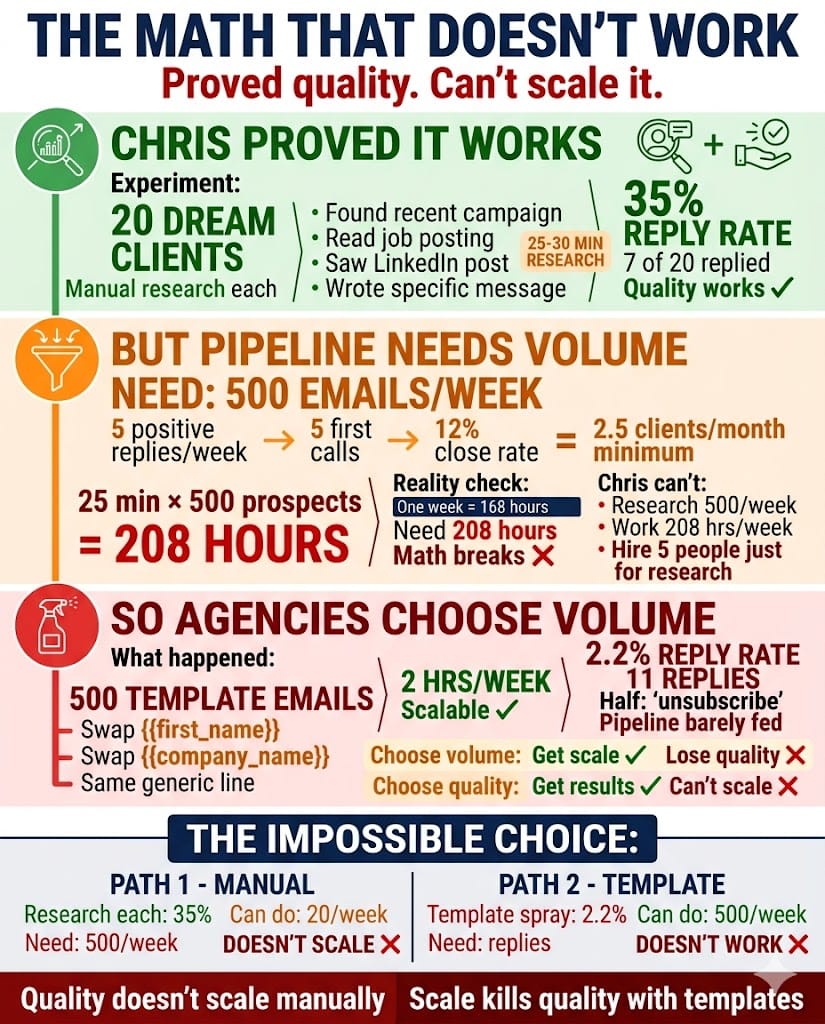

Chris knew the fix. He'd proven it. For their top 20 dream clients, he'd researched each one manually. Found a recent campaign they'd run. A job posting that signalled a gap. A LinkedIn post that revealed a priority. Wrote a message that referenced it. Reply rate on those 20: 35%.

But 35% on 20 emails doesn't build a pipeline. And he couldn't spend 25-30 minutes researching each of the 500 prospects he needed to reach every week. That's 208 to 250 hours. There aren't that many hours in a week.
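
The arithmetic behind that claim, as a throwaway Python check. It uses only the figures in this section: 500 prospects a week at 25-30 minutes of research each.

```python
# Manual research load per week, from the figures in the text:
# 500 prospects, 25-30 minutes of research each.
PROSPECTS_PER_WEEK = 500
HOURS_IN_A_WEEK = 7 * 24  # 168, every hour of every day


def weekly_research_hours(prospects: int, minutes_each: float) -> float:
    """Hours of manual research needed to cover `prospects` in one week."""
    return prospects * minutes_each / 60


low = weekly_research_hours(PROSPECTS_PER_WEEK, 25)
high = weekly_research_hours(PROSPECTS_PER_WEEK, 30)
print(f"{low:.0f}-{high:.0f} hours of research per week")  # 208-250
print(f"{HOURS_IN_A_WEEK} hours in an entire week")         # 168
```

Even the low end exceeds the 168 hours in a calendar week, before a single email is written.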

So the agency chose volume over quality. And got 2.2% to show for it.

The Agency

Digital marketing firm in Austin, Texas. Fourteen employees. SEO, paid media, content strategy, and analytics for mid-market e-commerce companies. Annual revenue: roughly $2.2M. Target: $3.5M. The gap: four to five new clients per year at $8K to $15K per month.

Chris's weekly output: 500 cold emails through Instantly plus 50 LinkedIn connection requests. Open rate: 42%. Reply rate: 2.2%. Five positive replies per week, leading to five first calls. Call-to-close: roughly 12%.

The pipeline maths: 500 emails produce 5 conversations, which produce 0.6 new clients per week. Roughly 2.5 per month. Sounds like enough. But zero buffer. One bad month and the target slips.
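
The same funnel as a small Python sketch. The reply and close rates come straight from this section. `positive_share` is set to 5/11 because five of the eleven weekly replies were positive, and the 52/12 weeks-per-month conversion is an averaging assumption. That conversion gives about 2.6 clients a month, in line with the "roughly 2.5" above.

```python
from dataclasses import dataclass

WEEKS_PER_MONTH = 52 / 12  # about 4.33, an averaging assumption


@dataclass
class Funnel:
    emails: int            # cold emails sent per week
    reply_rate: float      # share of emails that get any reply
    positive_share: float  # share of replies that become a first conversation
    close_rate: float      # share of first conversations that become clients

    def replies(self) -> float:
        return self.emails * self.reply_rate

    def conversations(self) -> float:
        return self.replies() * self.positive_share

    def clients_per_week(self) -> float:
        return self.conversations() * self.close_rate


before = Funnel(emails=500, reply_rate=0.022, positive_share=5 / 11, close_rate=0.12)
print(f"replies/week:       {before.replies():.0f}")           # 11
print(f"conversations/week: {before.conversations():.0f}")     # 5
print(f"clients/week:       {before.clients_per_week():.1f}")  # 0.6
print(f"clients/month:      {before.clients_per_week() * WEEKS_PER_MONTH:.1f}")  # 2.6
```

Every stage multiplies the next, so any change in reply rate flows straight through to clients per week.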

Cost of Chris's outreach time: roughly 40% of his week. About $52,000 per year dedicated to messages with a 2.2% reply rate.

The dream-20 approach proved the concept. Research each prospect, find something specific, write something that sounds like you actually looked at their business. 35% reply rate. But research took 25-30 minutes per prospect. At 500 per week, the maths breaks.

Why Nothing Bridged the Gap

Template variation was the first attempt. Twelve variants. A/B tested extensively. Best template: 3.1% reply rate. Worst: 1.4%. The ceiling was clear. No amount of template work would break past 4% because the fundamental problem wasn't the template. It was the absence of anything specific to the person reading it.

Hiring a VA for research was the next move. Output: 15-20 researched prospects per day. At 500 per week, they'd need five VAs at $2,500 to $4,000 per month combined. And quality varied. Some research was surface-level, some was genuinely useful, and there was no predicting the mix.

Manual LinkedIn engagement was Chris's favourite approach. Two hours a day, engaging with prospect content before sending connection requests. Reply rate: 18%. Volume: 10-15 per day maximum.

They tried AI writing tools. Gave the tool a prospect's website, asked for a personalised email. Result: "I noticed your company focuses on sustainable fashion." Every AI-generated cold email says this. Reply rates were actually worse than templates: 1.8%.

Quality doesn't scale. Scale isn't quality. And generic AI makes it worse because it produces emails that sound personalised but aren't.

What We Built

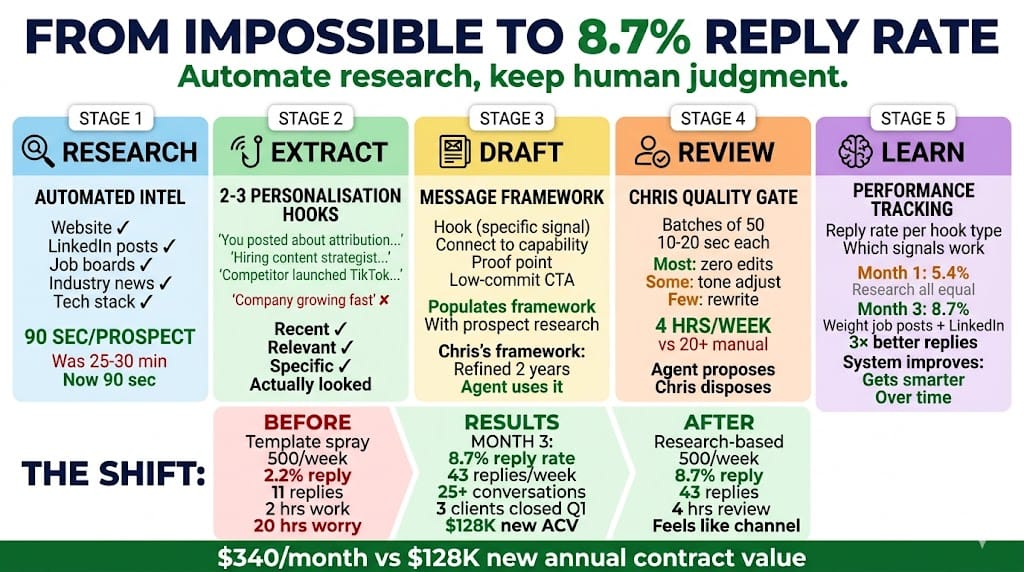

Five stages. The insight was simple: the problem wasn't the writing. It was the research. Automate the research, keep the human on the writing judgment.

Stage 1: Prospect intelligence gathering

For each prospect on the week's list, the agent researches across five sources. Company website: recent news, product launches, hiring signals, blog content. LinkedIn: the prospect's recent posts, comments, job changes, shared content. Job boards: open positions at the company. A company hiring a "performance marketing manager" is a signal they're investing in paid media, which is directly relevant to the agency's services. Industry news: recent funding rounds, partnerships, acquisitions. Tech stack signals via BuiltWith or similar: what tools they run, indicating maturity and budget.
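
Here is a minimal Python sketch of how Stage 1's output might be structured. It assumes each source sits behind its own fetcher function. The fetchers, the `Finding` and `ProspectResearch` types, and the example prospect are all hypothetical. Real fetchers would wrap whatever website scraper, LinkedIn data provider, job-board search, news feed, and BuiltWith-style lookup the agent actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable

# The five sources described above (names are illustrative).
SOURCES = ("website", "linkedin", "job_boards", "industry_news", "tech_stack")


@dataclass
class Finding:
    source: str    # which source produced it
    text: str      # raw detail, e.g. a job title or a post excerpt
    age_days: int  # days since it was published


@dataclass
class ProspectResearch:
    prospect: str
    company: str
    findings: list[Finding] = field(default_factory=list)


# A fetcher takes (prospect, company) and returns whatever it found.
Fetcher = Callable[[str, str], list[Finding]]


def gather(prospect: str, company: str, fetchers: dict[str, Fetcher]) -> ProspectResearch:
    """Query every configured source and pool the results into one research record."""
    research = ProspectResearch(prospect, company)
    for source in SOURCES:
        fetch = fetchers.get(source)
        if fetch is None:
            continue  # source not configured for this run
        try:
            research.findings.extend(fetch(prospect, company))
        except Exception:
            continue  # one failing source (timeout, rate limit) shouldn't sink the prospect
    return research


# Usage with a stub fetcher standing in for a real job-board search.
def fake_job_search(prospect: str, company: str) -> list[Finding]:
    return [Finding("job_boards", f"{company} is hiring a performance marketing manager", 6)]


r = gather("Dana", "Acme Apparel", {"job_boards": fake_job_search})
print(r.findings[0].text)  # Acme Apparel is hiring a performance marketing manager
```

Pooling everything into one record keeps Stage 2 independent of where each signal came from. It only needs the source name, the detail, and its age.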

Time per prospect: about 90 seconds automated. Chris's manual version: 25-30 minutes. Same research. Different speed.

Stage 2: Signal extraction

From the raw research, the agent identifies two to three "personalisation hooks." Specific, recent, relevant signals that demonstrate genuine awareness of the prospect's situation.

Not "I noticed your company is growing fast." That's a template line with a name pasted in. Instead: "You posted last week about struggling with attribution across six ad platforms." Or: "You're hiring a content strategist, which suggests you're scaling content but may need SEO infrastructure to support it." Or: "Your competitor just launched a TikTok shop. Your e-commerce traffic is 80% organic search. That's a strength, but diversification might be on your radar."

The difference between these and template lines isn't sophistication. It's specificity. The prospect reads it and thinks: "They actually looked at my business." Because the agent actually did.
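
One way Stage 2's selection step could work, sketched in Python. Stale signals are dropped. The rest are scored by a per-source weight times a recency decay, and the top two or three are kept. The weights, the 60-day cutoff, and the 14-day half-life are assumptions for illustration. The weights start equal, matching the even spread the agent began with, and Stage 5 is what would adjust them. Judging whether a signal is relevant to the agency's services is the harder part, and it is left out here.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    source: str    # e.g. "linkedin", "job_boards", "website", "tech_stack"
    text: str      # the specific, citable detail
    age_days: int  # days since it was posted or published


# Assumed starting point: every source weighted equally until reply data exists.
DEFAULT_WEIGHTS = {"website": 1.0, "linkedin": 1.0, "job_boards": 1.0,
                   "industry_news": 1.0, "tech_stack": 1.0}


def pick_hooks(signals, weights=DEFAULT_WEIGHTS, max_age_days=60, half_life_days=14, k=3):
    """Return up to k hooks, ranked by source weight times an exponential recency decay."""
    def score(s: Signal) -> float:
        return weights.get(s.source, 0.0) * 0.5 ** (s.age_days / half_life_days)

    recent = [s for s in signals if s.age_days <= max_age_days]
    return sorted(recent, key=score, reverse=True)[:k]


signals = [
    Signal("linkedin", "posted about attribution across six ad platforms", 7),
    Signal("job_boards", "hiring a content strategist", 3),
    Signal("website", "press release about a 2021 rebrand", 400),
    Signal("tech_stack", "runs a headless storefront", 30),
]
# Prints job_boards, then linkedin, then tech_stack; the 400-day-old press release is filtered out.
for hook in pick_hooks(signals):
    print(hook.source, "->", hook.text)
```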

Stage 3: Message drafting

Using the hooks, the agent drafts an email and LinkedIn message. The structure follows a framework Chris refined over two years of manual outreach: opening line referencing the specific signal. One to two sentences connecting the signal to a relevant capability. A brief proof point from a similar client result. A low-commitment CTA: reply, 15-minute call, or "want me to send over how we handled this for a similar company?"
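
The four-part framework can be pinned down as a data structure, so every draft has the same shape whoever or whatever wrote it. Here is a hedged Python sketch. The `Draft` class and its checks are hypothetical, and the three CTAs paraphrase the options listed above. The sentence count is a crude split on full stops that would miscount abbreviations. The proof point is left as a placeholder rather than an invented client result.

```python
from dataclasses import dataclass

# The three low-commitment CTAs from the framework, paraphrased.
CTAS = (
    "Worth a quick reply?",
    "Open to a 15-minute call?",
    "Want me to send over how we handled this for a similar company?",
)


@dataclass
class Draft:
    opener: str  # references the specific signal
    bridge: str  # one or two sentences tying the signal to a relevant capability
    proof: str   # brief result from a similar client
    cta: str     # one of the low-commitment asks

    def problems(self) -> list[str]:
        """Structural checks a draft must pass before it reaches review."""
        issues = []
        # Crude sentence count: treat "?" and "!" as full stops, split, drop empties.
        normalised = self.bridge.replace("?", ".").replace("!", ".")
        sentences = [s for s in normalised.split(".") if s.strip()]
        if not 1 <= len(sentences) <= 2:
            issues.append("bridge should be one or two sentences")
        if self.cta not in CTAS:
            issues.append("CTA is not one of the low-commitment options")
        if "{{" in self.opener:
            issues.append("opener still contains a template placeholder")
        return issues

    def render(self, first_name: str) -> str:
        return f"Hi {first_name},\n\n{self.opener}\n\n{self.bridge} {self.proof}\n\n{self.cta}"


draft = Draft(
    opener="Saw you're hiring a content strategist.",
    bridge="That usually means content is scaling, and the SEO infrastructure has to keep up.",
    proof="[one-line result from a comparable client]",
    cta=CTAS[2],
)
print(draft.problems())  # []
print(draft.render("Sam"))
```

Drafts that fail a check can go back for another pass before they ever reach human review.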

The agent doesn't write from scratch. It populates the framework with prospect-specific research. Chris reviews and adjusts tone. The agent does the research and first draft. Chris does the judgment and final edit.

Stage 4: Quality gate

Every message goes through Chris before sending. He reviews in batches of 50, spending 10-20 seconds per message. Most need zero edits. Some need a tone adjustment. Occasionally one needs a rewrite. This is the human judgment layer. The agent proposes. Chris disposes.
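
Here is a minimal Python sketch of the gate. Drafts are chunked into review batches of 50 and every draft gets a human verdict. Only approved drafts go straight to the send queue. The `Verdict` categories mirror the three outcomes described above, and the stub reviewer in the usage lines is a stand-in for Chris. At 10-20 seconds per message, 500 drafts come to roughly 83-167 minutes of reading before any edits.

```python
from enum import Enum
from typing import Callable, Iterator


class Verdict(Enum):
    APPROVE = "approve"  # most drafts: send as written
    EDIT = "edit"        # tone adjustment needed
    REWRITE = "rewrite"  # occasional full rewrite


def batches(items: list[str], size: int = 50) -> Iterator[list[str]]:
    """Yield consecutive review batches of at most `size` drafts."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


def run_quality_gate(drafts: list[str], review: Callable[[str], Verdict],
                     size: int = 50) -> tuple[list[str], list[str]]:
    """Every draft gets a verdict; only APPROVE goes straight to the send queue."""
    send_now: list[str] = []
    needs_work: list[str] = []
    for batch in batches(drafts, size):
        for draft in batch:
            if review(draft) is Verdict.APPROVE:
                send_now.append(draft)
            else:
                needs_work.append(draft)
    return send_now, needs_work


# Usage: 500 placeholder drafts and a stub reviewer that flags every tenth one.
drafts = [f"draft {i}" for i in range(500)]
send_now, needs_work = run_quality_gate(
    drafts, lambda d: Verdict.EDIT if d.endswith("7") else Verdict.APPROVE
)
print(len(send_now), len(needs_work))  # 450 50
```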

Stage 5: Performance tracking and learning

The agent tracks reply rates by personalisation hook type and learns which signals generate the highest responses. Month one: the agent researched evenly across all signal types. By month three: it weighted job postings and LinkedIn activity highest because those correlated with 3x higher reply rates than company news or tech stack signals. The system gets better at finding the hooks that actually work.
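
The text doesn't say exactly how the agent turns reply data into research priorities. Here is one simple possibility, sketched in Python. It counts sends and replies per hook type and starts with an even split, as in month one. It then allocates research effort in proportion to each type's reply rate, with a small floor so no source is dropped entirely. The floor, the class name, and the counts in the usage lines are illustrative assumptions. The counts are chosen only to mirror the 3x gap described above, not taken from the agency's data. A multi-armed bandit method such as Thompson sampling would be a more principled alternative.

```python
from collections import defaultdict


class HookTracker:
    """Tallies sends and replies per hook type and converts them into research weights."""

    def __init__(self, hook_types, floor=0.05):
        self.hook_types = list(hook_types)
        self.floor = floor  # assumed minimum research share per type
        self.sent = defaultdict(int)
        self.replied = defaultdict(int)

    def record(self, hook_type: str, got_reply: bool) -> None:
        self.sent[hook_type] += 1
        if got_reply:
            self.replied[hook_type] += 1

    def reply_rate(self, hook_type: str) -> float:
        sent = self.sent[hook_type]
        return self.replied[hook_type] / sent if sent else 0.0

    def research_weights(self) -> dict[str, float]:
        """Share of research effort per hook type; sums to 1 when floor * types <= 1."""
        n = len(self.hook_types)
        rates = {h: self.reply_rate(h) for h in self.hook_types}
        total = sum(rates.values())
        if total == 0:
            return {h: 1 / n for h in self.hook_types}  # no data yet: spread evenly
        spare = 1 - self.floor * n
        return {h: self.floor + spare * r / total for h, r in rates.items()}


tracker = HookTracker(["job_postings", "linkedin_activity", "company_news", "tech_stack"])
# Illustrative counts only: (hook type, emails sent, replies).
for hook, sent, replies in [("job_postings", 100, 12), ("linkedin_activity", 100, 12),
                            ("company_news", 100, 4), ("tech_stack", 100, 4)]:
    for i in range(sent):
        tracker.record(hook, got_reply=i < replies)

# Prints job_postings 0.35, linkedin_activity 0.35, company_news 0.15, tech_stack 0.15.
for hook, weight in tracker.research_weights().items():
    print(f"{hook}: {weight:.2f}")
```

The floor is the design choice that matters most here. Without it, a hook type that underperforms early would never be researched again, so the system could never notice if it started working.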

What We Learned Building It

The research quality varied by source. LinkedIn activity was the richest signal. Prospects who post regularly give you hooks on a platter. Job postings were second: a company hiring for a role related to your services is one of the strongest cold outreach signals that exists. Company websites were hit-or-miss. Tech stack data was useful for qualifying but rarely produced a compelling opener. By month two, the agent was spending 60% of research time on LinkedIn and job boards.

Chris's review rate was higher than expected. We'd planned for 5% of messages needing edits. Actual rate in month one: about 22%. The agent occasionally pulled signals that were technically accurate but tonally off. Referencing a company's recent layoffs as a "restructuring opportunity" doesn't land well. Chris caught these. By month three, as the learning loop improved and we added a few guardrails around sensitive topics, the edit rate dropped to about 8%.
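
The text doesn't list the guardrails. Here is a minimal sketch of one plausible kind: a keyword screen in Python that flags hooks touching sensitive topics, so they go to Chris rather than into an opener. The term list is hypothetical and deliberately short. A real list would presumably grow from the drafts Chris actually flagged.

```python
import re

# Hypothetical sensitive-topic patterns; not the list the agency actually used.
SENSITIVE_PATTERNS = [
    r"\blay ?offs?\b",
    r"\brestructur\w*",
    r"\bredundanc\w*",
    r"\blawsuits?\b",
    r"\bbankrupt\w*",
    r"\brecalls?\b",
]
SENSITIVE = re.compile("|".join(SENSITIVE_PATTERNS), re.IGNORECASE)


def sensitive_terms(hook_text: str) -> list[str]:
    """Return sensitive terms found in a hook; any match means a human decides, not the agent."""
    return [m.group(0) for m in SENSITIVE.finditer(hook_text)]


print(sensitive_terms("Recent layoffs look like a restructuring opportunity"))
# ['layoffs', 'restructuring']
print(sensitive_terms("You're hiring a content strategist"))  # []
```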

Three prospects mentioned the personalisation specifically. "This is the first cold email I've actually read in months." One of those three became a client. Chris framed it perfectly: "The email didn't sell them. The email earned the right to a conversation. The conversation sold them."

The learning loop was the sleeper feature. We built it as a nice-to-have. It became the most important stage. The difference between month one (5.4% reply rate) and month three (8.7%) was almost entirely driven by the agent learning which hook types worked. Job postings plus LinkedIn activity produced reply rates 3x higher than company news alone. Without the loop, the agent would still be researching everything equally and leaving performance on the table.

The Numbers

| Metric | Before | After (Month 3) |
|---|---|---|
| Emails/week | 500 | 500 |
| Reply rate | 2.2% | 8.7% |
| Positive replies/week | ~5 | ~25 |
| Weekly first conversations | 5 | 25+ |
| Chris's research time/week | 20+ hrs | 0 (agent handles) |
| Chris's review/edit time/week | N/A | ~4 hrs |
| New clients closed (Q1) | ~2 (expected pace) | 3 |
| Agent cost/month | N/A | $340 |

New client revenue attributed to improved outreach in Q1: roughly $128,000 in annual contract value. One additional client beyond expected pace, plus a pipeline entering Q2 with 14 active conversations instead of the typical 3-4.

$340 per month. $4,080 per year. Against $128,000 in new annual client value and 16 hours of Chris's week returned to relationship nurturing and proposal writing.
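
The return arithmetic, spelled out in Python with the figures from this section. The $128,000 is annual contract value, which is revenue rather than profit, so the ratio overstates the margin return. The hours figure takes the table's 20 hours of research minus roughly 4 hours of review. Since the table says "20+", that is a lower bound.

```python
# Figures from the results section above.
AGENT_COST_PER_MONTH = 340   # USD
NEW_ACV_Q1 = 128_000         # annual contract value attributed to the outreach, USD
RESEARCH_HOURS_BEFORE = 20   # Chris's weekly research time before the agent
REVIEW_HOURS_AFTER = 4       # his weekly review/edit time after

annual_cost = AGENT_COST_PER_MONTH * 12
hours_returned = RESEARCH_HOURS_BEFORE - REVIEW_HOURS_AFTER

print(f"annual agent cost: ${annual_cost:,}")                            # $4,080
print(f"ACV per dollar of agent cost: {NEW_ACV_Q1 / annual_cost:.0f}x")  # 31x
print(f"hours returned per week: {hours_returned}")                      # 16
```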

But the number Chris cares about most: 8.7%. At 2.2%, cold outreach felt pointless. Every Monday was the same grind of sending messages he knew would mostly be ignored. At 8.7%, it feels like a channel. The work has a return. The pipeline has conversations. The maths works.

The Pattern

If you're running outbound outreach and your personalisation strategy is swapping {{first_name}} and {{company_name}} into a template, your list probably isn't the problem. Your message is.

You can't scale quality research manually. One person can research 20 prospects a week at 25 minutes each. An agent can research 500 at 90 seconds each. Same sources. Same signals. Different speed.

And generic AI-generated emails make it worse, not better. Prospects can smell "I noticed your company" from across their inbox. The fix isn't better templates. It's better research, applied to a proven framework, with human judgment as the quality gate.

The agent doesn't write magic copy. It does the research that makes copy specific. The part that's impossible for humans to do at volume and trivial for an agent to do at scale. The writing judgment stays human. The 25 minutes of research per prospect doesn't.

Want to see if your outreach has a personalisation gap? The AI Bottleneck Diagnostic takes 10 minutes and shows you where volume is killing quality. No pitch.

Want to see 25+ agent architectures across different industries? Download Unstuck. It includes blueprints for cash flow, documentation, pipeline tracking, subcontractor matching, and more.

by TG

for the AdAI Ed. Team