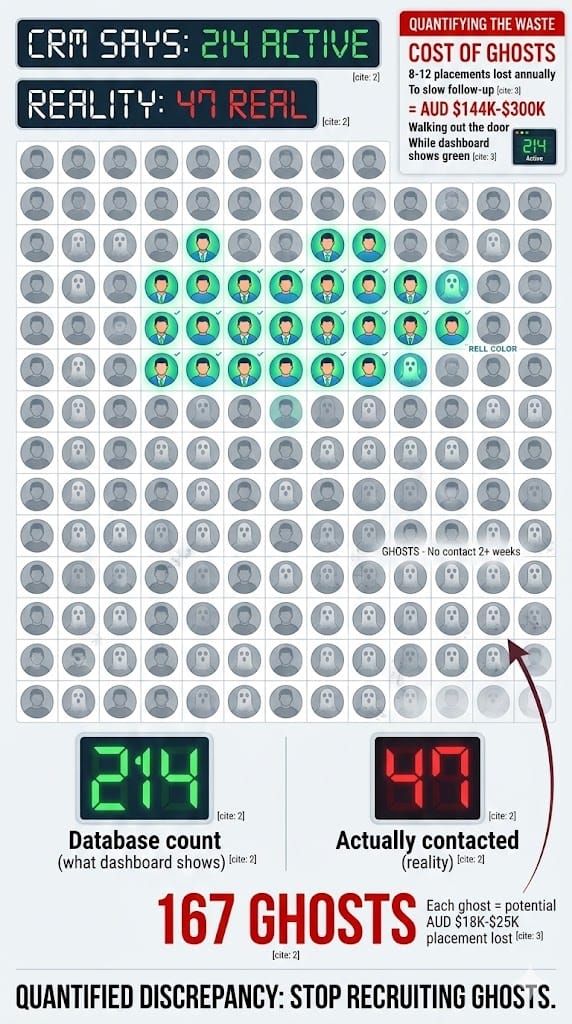

TL;DR: A boutique recruitment agency in Melbourne specialising in accounting and finance placements had 214 "active" candidates in their CRM. When the director asked consultants to list the ones they'd actually spoken to in the last seven days, the answer was 47. The other 167 were ghosts. Sitting in a database, going stale, some already placed elsewhere. We built a velocity agent that tracks days-in-stage for every candidate, flags stalls, scores by urgency, and delivers each consultant a morning briefing with their five most critical follow-ups. Ghost candidates: identified and archived. Average follow-up time on priority candidates: 4.2 days down to 11 hours. Candidates lost to competitor offers: 7 per quarter down to 2. Three additional placements in Q1 directly attributed to velocity alerts. Agent cost: AUD $240/month.

She Was Perfect

Ten years of experience. Two relevant certifications. References that glowed. The client had been waiting six weeks for a candidate like this.

The consultant who found her flagged her as a priority on Tuesday. Sent himself an internal note: "Call Sarah M - priority." Then a client meeting overran. Then an urgent contract role came in that needed a shortlist by end of day. Then it was Friday.

By Monday, she'd signed with a competitor.

He found out via LinkedIn. New role. New company. Posted with a smiley face and a paragraph about "exciting new chapters."

He checked his task list. "Call Sarah M - priority" was sitting there, five days old, buried among 36 other follow-ups he hadn't gotten to either.

The agency director, Nathan, asked the question every agency owner eventually asks: "How does this keep happening?"

The answer was uncomfortable. It keeps happening because six people can't meaningfully track 200-plus candidates across 30-plus open roles without things falling through the cracks. Not because they don't care. Not because they're disorganised. Because the maths doesn't work. Six humans. Two hundred candidates. Thirty-five roles. Daily client calls, new briefs, admin, references, interview coordination. Something always slips. And the thing that slips is usually the follow-up that isn't screaming for attention right now but matters enormously by next week.

The Agency

Boutique recruitment firm in Melbourne. Specialises in accounting and finance placements, permanent and contract. Nathan runs it. Six consultants, one resourcer, one admin.

About 200 active candidates at any given time across 30 to 35 open roles. The CRM is well-populated. Pipeline stages are standard: sourced, contacted, screening, submitted to client, interview, offer, placed. Average placement fee: AUD $18,000 to $25,000.

The pipeline looked healthy. The dashboard showed 214 active candidates. Consultants were making calls, sending emails, submitting candidates. Activity metrics were fine.

Then Nathan did something nobody had done before. He asked each consultant to name the candidates they'd personally spoken to in the last seven days.

Forty-seven.

The other 167 were ghosts. Candidates sitting in a CRM status called "active" who hadn't been contacted in two weeks, three weeks, some over a month. Some had already been placed by other agencies. Some had lost interest. Some had forgotten they'd applied. The database said 214. Reality said 47.

Each lost priority candidate represents a potential AUD $18K-$25K placement fee. Nathan estimated the agency had lost 8 to 12 placements in the previous year to slow follow-up. That's AUD $144K to $300K in revenue that walked out the door while the dashboard showed green.

Why the CRM Was Lying

They'd tried to fix this before. Every attempt measured the wrong thing.

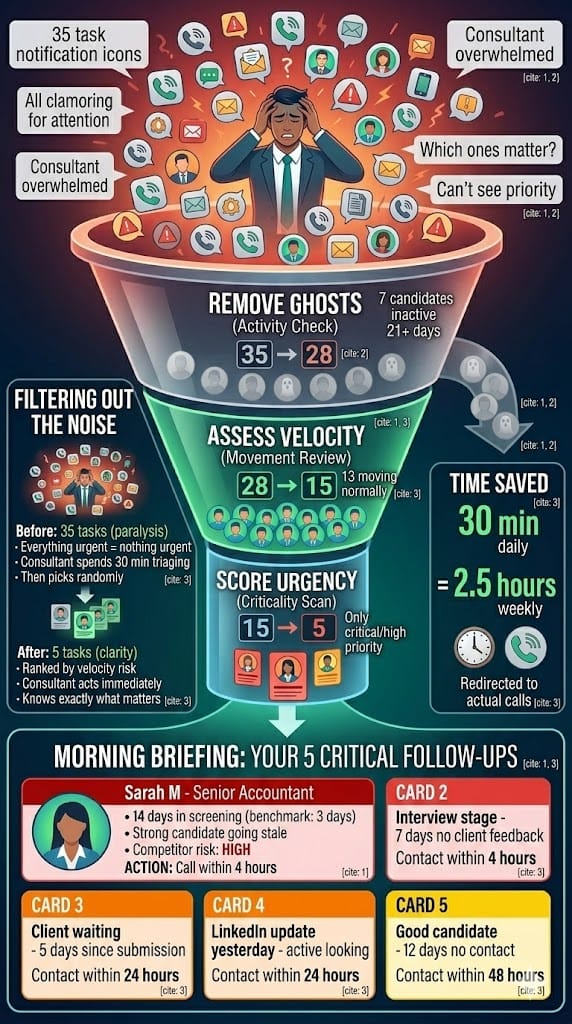

CRM reminders were the obvious first step. Every candidate gets a follow-up task. But when a consultant has 35 tasks due today and 3 are genuinely urgent, the other 32 slip. By Wednesday, last Monday's tasks are buried under this week's tasks. The reminder system doesn't create priority. It creates noise. And noise teaches people to ignore the system.

Weekly pipeline review meetings ran 90 minutes every Monday. Consultants reported on "active" candidates. But "active" meant "exists in the CRM," not "spoken to recently." A candidate flagged as active three weeks ago with no contact since was discussed as if she were still engaged. The meeting reinforced the illusion instead of exposing it.

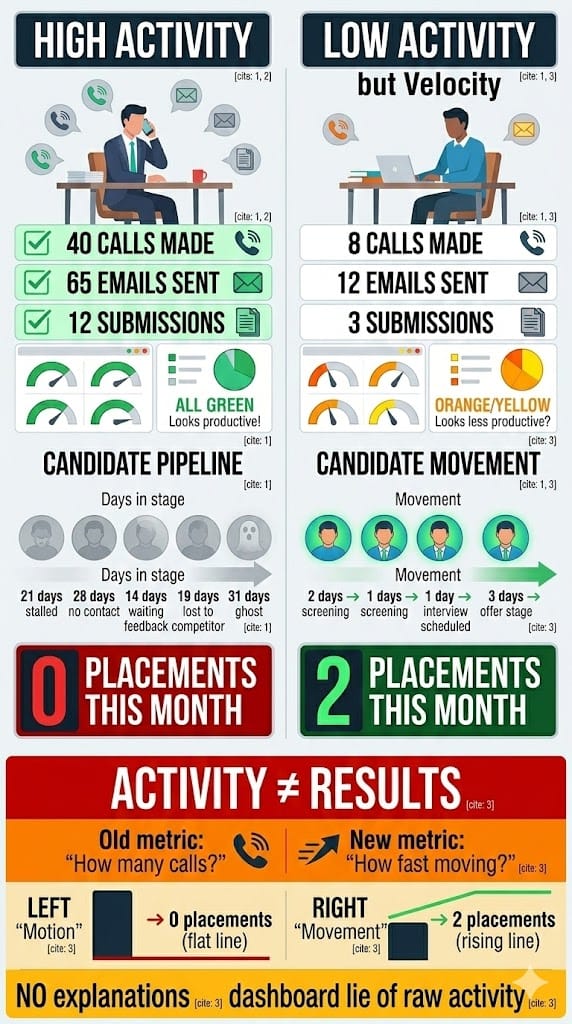

KPI dashboards tracked activities. Calls made. Emails sent. Submissions completed. A consultant who made 40 calls to dead candidates looked better on the dashboard than one who made 8 calls to candidates who were genuinely moving. The metrics rewarded motion, not movement.

Nathan hired a resourcer specifically to keep the pipeline warm. But one person can't meaningfully manage 200-plus candidates across 35 roles. She became another person doing the same overwhelmed job, just with a different title.

Every fix measured activity. Nobody measured velocity: how fast candidates actually move through stages versus how long they sit.

What We Built

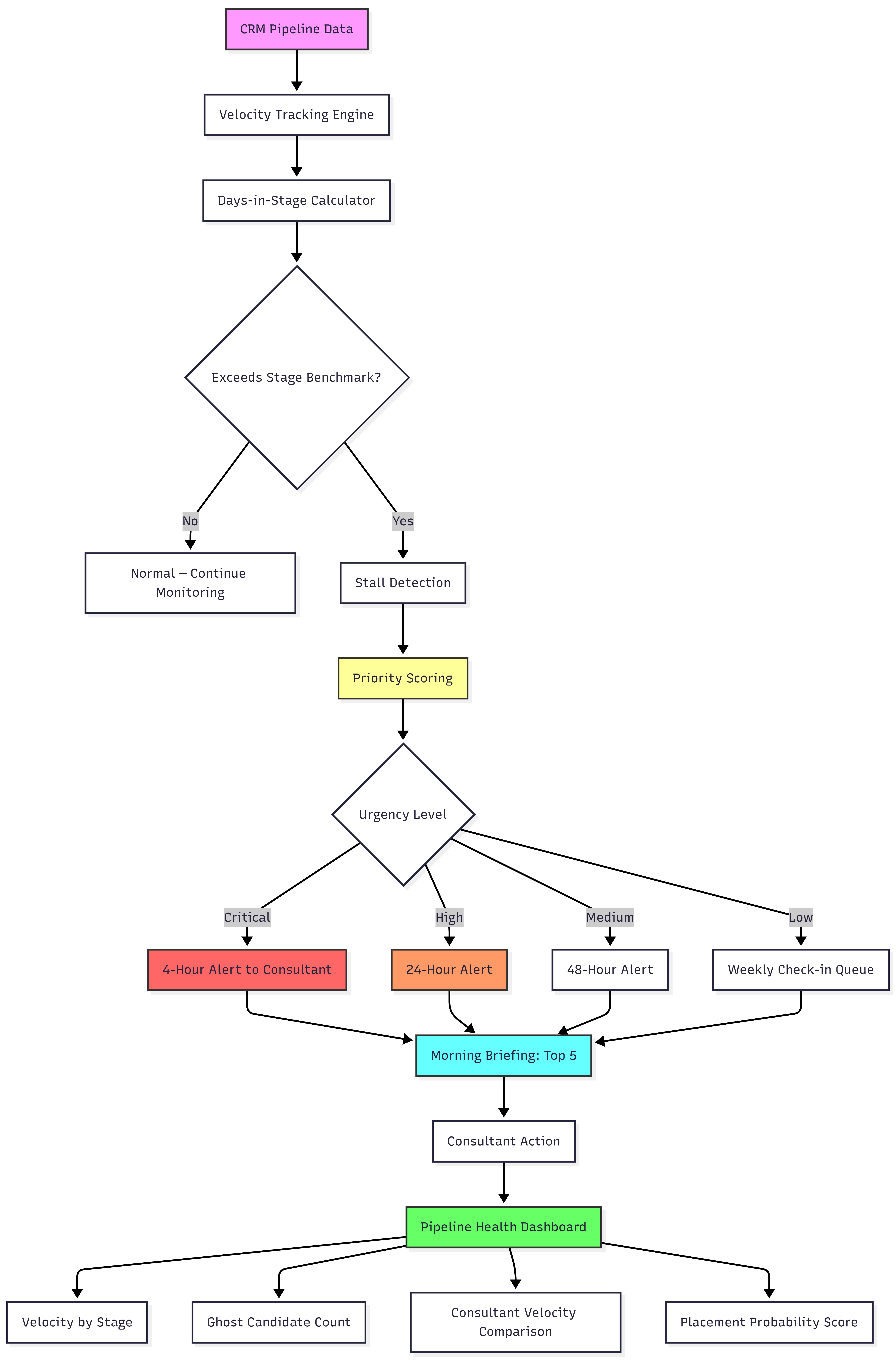

Four stages, all running against the live CRM data.

Stage 1: Velocity tracking

The agent monitors every candidate's movement through pipeline stages. It calculates days-in-stage for each candidate and compares against benchmarks by role type and seniority level.

A senior accountant candidate in "screening" for three days is normal. The same candidate in "screening" for fourteen days is stale. A contract bookkeeper in "submitted to client" for two days is fine. At seven days with no client feedback, the submission is dying.

The benchmarks came from the agency's own historical data. We pulled 18 months of placement records and calculated median stage durations for successful placements by role category. Those medians became the baseline. Anything significantly exceeding them triggers the next stage.
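To make the benchmark idea concrete, here is a minimal sketch of how median stage durations could be turned into a stall check. The records, the function names, and the `tolerance` multiplier are all assumptions for illustration. The post says only that candidates "significantly exceeding" the median get flagged, so the 2x cut-off below is a placeholder, not the agency's actual rule.

```python
from collections import defaultdict
from datetime import date
from statistics import median

# Hypothetical history of successful placements:
# (role_category, stage, days_spent_in_stage). Values are illustrative only.
HISTORY = [
    ("permanent senior", "screening", 2),
    ("permanent senior", "screening", 3),
    ("permanent senior", "screening", 5),
    ("contract", "submitted to client", 1),
    ("contract", "submitted to client", 2),
    ("contract", "submitted to client", 3),
]


def build_benchmarks(history):
    """Median days-in-stage for each (role_category, stage) pair."""
    durations = defaultdict(list)
    for role_category, stage, days in history:
        durations[(role_category, stage)].append(days)
    return {key: median(values) for key, values in durations.items()}


def days_in_stage(entered_stage_on, today):
    """Whole days since the candidate entered their current stage."""
    return (today - entered_stage_on).days


def is_stalled(role_category, stage, entered_stage_on, today, benchmarks,
               tolerance=2.0):
    """True when days-in-stage exceeds tolerance x the benchmark median.

    tolerance is an assumed multiplier; tune it against real false-alert rates.
    """
    benchmark = benchmarks.get((role_category, stage))
    if benchmark is None:
        return False  # no history for this combination, so no alert
    return days_in_stage(entered_stage_on, today) > tolerance * benchmark


BENCHMARKS = build_benchmarks(HISTORY)
```

With this sample data, a senior candidate three days into screening passes the check, while the same candidate at fourteen days is flagged, which mirrors the examples above.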

Stage 2: Stall detection and priority scoring

Candidates exceeding their stage benchmark get scored by urgency:

Critical means high-value candidate, client waiting, competitor risk indicators. Contact within 4 hours. This is the Sarah M scenario. A strong candidate stalling at a stage where competitor offers are likely.

High means a good candidate stalling at interview stage. Contact within 24 hours. The client hasn't given feedback. The candidate is waiting. Silence is the enemy.

Medium means a pipeline candidate with no recent activity. Contact within 48 hours. Not urgent today, but drifting toward ghost status.

Low means early-stage candidate, no immediate pressure. Weekly check-in is sufficient.

The agent also cross-references external signals where available: LinkedIn profile updates and job board activity changes. A candidate who updated their LinkedIn headline last week is probably looking actively and may move fast. Treat accordingly.
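The four tiers can be sketched as a small rule set. The contact windows come straight from the descriptions above (with "weekly" taken as 168 hours). The field names and the exact rule order are assumptions, since the post doesn't spell out how the agent weighs each signal.

```python
from dataclasses import dataclass

# Contact windows in hours, one per tier described above.
CONTACT_WINDOW_HOURS = {"critical": 4, "high": 24, "medium": 48, "low": 168}


@dataclass
class Candidate:
    name: str
    stage: str
    stalled: bool          # has exceeded the stage benchmark
    high_value: bool       # hypothetical flag set by the consultant
    client_waiting: bool   # hypothetical flag: a client is waiting on this role
    competitor_risk: bool  # e.g. a recent LinkedIn profile update


def urgency(candidate):
    """Assumed rule order: check the most urgent tier first, fall through to low."""
    if not candidate.stalled:
        return "low"
    if (candidate.high_value and candidate.client_waiting
            and candidate.competitor_risk):
        return "critical"
    if candidate.stage == "interview":
        return "high"
    return "medium"


def contact_within_hours(candidate):
    """How soon the consultant should make contact, in hours."""
    return CONTACT_WINDOW_HOURS[urgency(candidate)]
```

A real scorer would likely weight these signals rather than treat them as booleans, but the tiers and deadlines would map the same way.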

Stage 3: Consultant alerts and daily briefing

Each consultant gets a morning briefing at 8:15 AM: "Here are your 5 most urgent follow-ups today, ranked by velocity risk."

Not 35 tasks. Five. With context: why this candidate is urgent, how long they've been stalling, what the recommended next action is.

This was the design decision that made the biggest difference. We could've built another task list. Instead, we built a filter. The system's job isn't to show everything. It's to surface the five things that matter most right now and suppress the noise around them.
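The filter itself is simple to sketch. The version below assumes each follow-up already carries an urgency tier and a count of days over benchmark. The dictionary keys and the tie-breaking rule are illustrative choices, not a description of the production agent.

```python
import heapq

# Lower rank sorts first, so "critical" leads the briefing.
URGENCY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


def morning_briefing(follow_ups, limit=5):
    """Return the `limit` most urgent follow-ups, most urgent first.

    Ties within a tier go to the candidate furthest past their benchmark
    (an assumed tie-breaker).
    """
    return heapq.nsmallest(
        limit,
        follow_ups,
        key=lambda f: (URGENCY_RANK[f["urgency"]], -f["days_over_benchmark"]),
    )


def format_briefing(consultant, items):
    """Render the briefing as plain text, one line per follow-up with context."""
    lines = [f"{consultant}: your {len(items)} most urgent follow-ups today"]
    for position, item in enumerate(items, start=1):
        lines.append(
            f"{position}. {item['candidate']} [{item['urgency']}] "
            f"{item['days_over_benchmark']} days over benchmark. "
            f"Why: {item['reason']}. Next: {item['next_action']}."
        )
    return "\n".join(lines)
```

Everything past the fifth item is deliberately dropped from the message. The suppressed follow-ups still exist in the CRM; they just don't compete for attention that morning.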

Stage 4: Pipeline health dashboard

Real-time view for Nathan:

Actual velocity by stage, reported as median days rather than the average so outliers don't skew the picture. Ghost candidate count: anyone in the CRM with no activity for more than 21 days. Consultant comparison based on velocity, not activity: whose candidates are actually moving through stages? And placement probability by candidate, based on velocity patterns. The data showed that fast-moving candidates converted at roughly three times the rate of stale ones. Speed predicted success better than any other variable.
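Two of the dashboard numbers are easy to illustrate: the ghost count and the per-stage median. This is a sketch under assumed data shapes (a name-to-date mapping and a list of stage/duration pairs). Only the 21-day threshold comes from the post.

```python
from collections import defaultdict
from datetime import date
from statistics import mean, median

GHOST_THRESHOLD_DAYS = 21  # threshold stated in the post


def ghost_candidates(last_activity, today):
    """Names with no recorded activity for more than GHOST_THRESHOLD_DAYS."""
    return sorted(
        name
        for name, last_seen in last_activity.items()
        if (today - last_seen).days > GHOST_THRESHOLD_DAYS
    )


def median_days_by_stage(records):
    """records: iterable of (stage, days_in_stage) pairs."""
    by_stage = defaultdict(list)
    for stage, days in records:
        by_stage[stage].append(days)
    return {stage: median(days) for stage, days in by_stage.items()}


# Why median rather than mean: one stale record drags the mean a long way.
# Illustrative durations only.
SCREENING_DAYS = [2, 3, 3, 4, 40]
SCREENING_MEDIAN = median(SCREENING_DAYS)  # 3
SCREENING_MEAN = mean(SCREENING_DAYS)      # 10.4
```

In the sample list, a single 40-day outlier pushes the mean past ten days while the median stays at three, which is closer to what a typical candidate experiences.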

What We Learned Building It

The benchmarks needed to be role-specific. Our first version used a single set of stage benchmarks across all roles. It flagged contract bookkeeper candidates as stale after the same duration as CFO searches. Those are completely different timelines. A contract role that isn't filled in two weeks is dead. A CFO search might run eight weeks with the right candidate still engaged. We built separate benchmark curves for four role categories (contract, permanent mid-level, permanent senior, executive) and the false alert rate dropped from about 30% to under 10%.

Consultant adoption took exactly three weeks. Week one: scepticism. "Another system telling me what to do." Week two: grudging use. A couple of consultants tried the morning briefing and found it saved them 30-plus minutes of task-list triage. Week three: the consultant who'd been most resistant had a critical alert surface a candidate he'd forgotten about. She was about to accept elsewhere. He called, re-engaged her, and placed her within ten days. After that, adoption wasn't a problem.

The ghost purge was emotionally difficult. Archiving 167 candidates felt like admitting failure. Nathan's instinct was to try re-engaging all of them first. We compromised: the agent sent a brief "Are you still looking?" message to every ghost candidate. Twelve responded. The rest were archived. The pipeline went from 214 to 89. It felt smaller. It was dramatically healthier.

The placement probability score became the most-used metric. We built it as an experiment. Turns out velocity through early stages is a strong predictor of eventual placement. Candidates who moved from contacted to screening in under 48 hours placed at 3.2x the rate of those who took longer than a week. Nathan started using the probability score to decide where to focus client conversations. "I've got a candidate with a 78% placement probability" carried more weight than "I've got someone who might be interested."
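The comparison behind that multiple is a ratio of two placement rates. Here is a rough sketch, assuming each record holds the hours a candidate took to move from contacted to screening and whether they were eventually placed. The 48-hour and one-week cut-offs come from the paragraph above. The data shape and function names are hypothetical.

```python
def placement_rate(group):
    """Share of candidates in the group who were placed, or None if empty."""
    if not group:
        return None
    placed = sum(1 for _, was_placed in group if was_placed)
    return placed / len(group)


def velocity_rate_ratio(candidates, fast_hours=48, slow_hours=168):
    """Placement rate of fast movers divided by that of slow movers.

    candidates: list of (hours_from_contacted_to_screening, was_placed).
    Candidates between the two cut-offs are left out of both groups.
    Returns None when either group is empty or no slow mover was placed.
    """
    fast = [c for c in candidates if c[0] < fast_hours]
    slow = [c for c in candidates if c[0] > slow_hours]
    fast_rate = placement_rate(fast)
    slow_rate = placement_rate(slow)
    if fast_rate is None or not slow_rate:
        return None
    return fast_rate / slow_rate
```

A ratio like this shows correlation only. Turning it into a per-candidate probability would need a proper model and enough history per role category to trust the estimate.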

The Numbers

| Metric | Before | After |
|---|---|---|
| "Active" candidates in CRM | 214 | 89 (genuinely active) |
| Follow-up time on priority candidates | 4.2 days | 11 hours |
| Candidates lost to competitors (Q1) | 7 | 2 |
| Placements (Q1 vs prior year Q1) | N/A | Up 23% |
| Monday pipeline meeting | 90 min | 30 min |
| Agent cost/month | N/A | AUD $240 |

Three additional placements in Q1 were directly attributed to velocity alerts: candidates who would've gone cold under the old system but were contacted within the critical window. At AUD $18K-$25K per placement, that's AUD $54K-$75K recovered in a single quarter, against a system cost of AUD $720 for the quarter.
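The arithmetic is worth checking by hand. A short sketch using only the figures quoted above; the function name is illustrative, and the calculation ignores build cost and consultant time, which the post doesn't quantify.

```python
FEE_RANGE_AUD = (18_000, 25_000)   # placement fee range quoted in the post
EXTRA_PLACEMENTS = 3               # placements attributed to velocity alerts
AGENT_COST_PER_MONTH_AUD = 240
MONTHS_PER_QUARTER = 3


def quarterly_return(extra_placements, fee_range, monthly_cost):
    """Return (recovered_low, recovered_high), quarterly_cost, (multiple_low, multiple_high)."""
    low_fee, high_fee = fee_range
    cost = monthly_cost * MONTHS_PER_QUARTER
    recovered = (extra_placements * low_fee, extra_placements * high_fee)
    multiple = (recovered[0] / cost, recovered[1] / cost)
    return recovered, cost, multiple


RECOVERED, COST, MULTIPLE = quarterly_return(
    EXTRA_PLACEMENTS, FEE_RANGE_AUD, AGENT_COST_PER_MONTH_AUD
)
# RECOVERED is (54000, 75000) and COST is 720, matching the figures above.
```

On these numbers the recovered fees come to somewhere between 75 and roughly 104 times the quarterly running cost.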

But Nathan said the biggest shift was cultural. The agency stopped measuring activity and started measuring velocity. "How many calls did you make?" became "How fast are your candidates moving?" The consultants who adapted fastest placed the most. The metric changed the behaviour.

The Pattern

If you're running a recruitment agency, or any firm with a multi-stage pipeline where speed is a competitive advantage, and your CRM measures what exists but not what's moving, your pipeline is lying to you.

Your dashboard shows activity. Calls made, emails sent, candidates in various stages. What it doesn't show is velocity: how fast things move, where they stall, and which ones are quietly dying while your team chases the loudest fires.

Your consultants aren't lazy. They're overwhelmed by a system that creates noise instead of signal. Thirty-five tasks with no priority ranking is as useless as having no task list at all. Both produce paralysis.

The agent doesn't replace the consultant's judgment or their relationships. It replaces the mental overhead of triaging a messy task list to find the five things that actually matter today. The rest can wait. The five can't.

Want to see if your pipeline has a velocity problem? The AI Bottleneck Audit takes 5 minutes and shows you where candidates, deals, or prospects are stalling. No pitch.

Want to see 25 agent architectures across different industries? Download Unstuck. It includes blueprints for intake, sourcing, conflicts, engagement letters, and more.

by CG

for the AdAI Ed. Team