TL;DR: Most cold outreach fails not because of targeting but because of personalisation, or rather, what most people call personalisation. Token substitution (swapping in a first name and company name) isn't personalisation. It's a merge field. There are three levels: token personalisation (ceiling: ~3%), context personalisation referencing something about the company (ceiling: ~6-8%), and signal personalisation referencing a specific, recent, relevant indicator of need (ceiling: ~12-20%). Almost nobody operates at Level 3 because it requires genuine research per prospect. The fix isn't better writing. It's better research, automated at the research step rather than the writing step. AI is bad at writing personalised emails from scratch. It's excellent at scanning five sources in 90 seconds to extract the signals that make an email feel researched.

Everybody Blames the List

Everyone's first instinct when reply rates are low: "Our ICP isn't refined enough." "We need better data." "The emails aren't getting through." "We should try a different sending tool."

But your open rates are 40-plus percent. The emails are getting through. The prospects are seeing them.

They're just not replying.

Because the email says nothing that proves you know anything about their business. "I noticed {{company_name}} is growing fast" is the cold email equivalent of walking up to someone at a conference and saying "So, you work in business." Everyone says it. Nobody means it. Nobody responds to it.

A marketing agency in Austin was sending 500 cold emails per week. Open rate: 42%. Reply rate: 2.2%. Eleven replies out of 500. The prospects were right: marketing directors and CMOs at mid-market e-commerce companies. Good ICP. Verified emails. Clean list.

The list wasn't the problem. The message was. Three templates, each opening with a generic observation that every competitor was also sending. The kind of line that gets skimmed, recognised as template, and deleted before the second sentence.

The problem isn't targeting. It's personalisation. Or rather, what most people call personalisation, which isn't personalisation at all. It's token substitution. And the difference between those two things is the difference between a 2.2% reply rate and an 8.7% one.

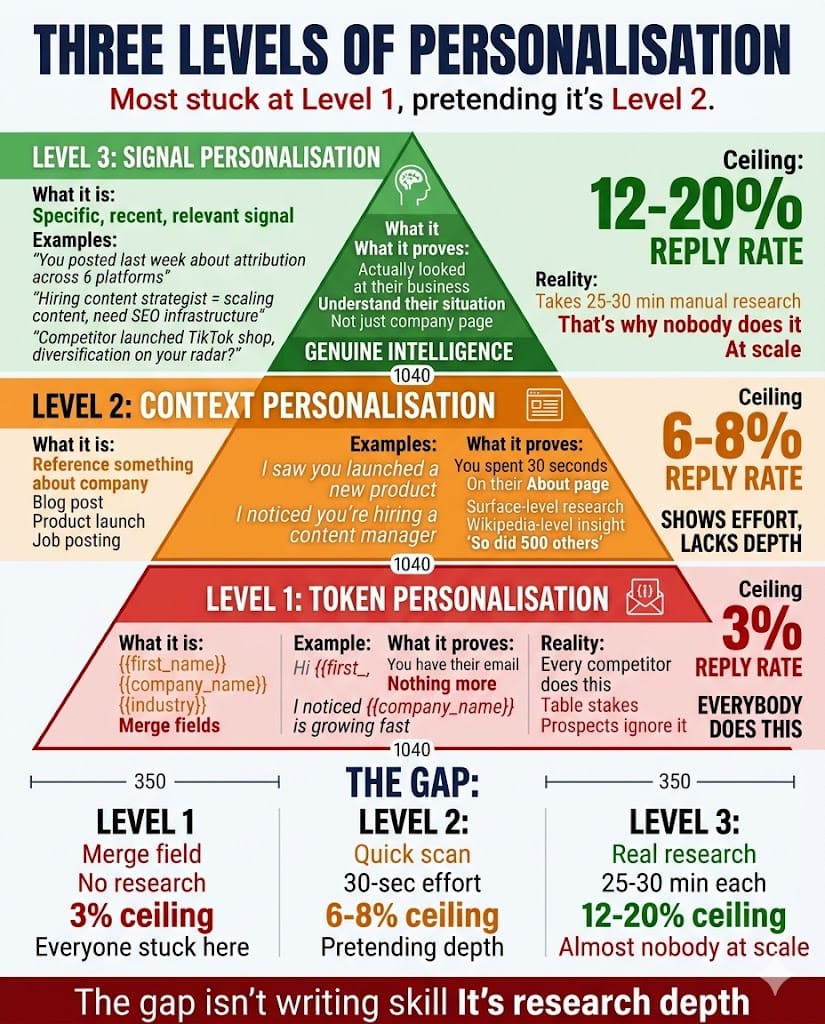

Three Levels of Personalisation

Not all personalisation is the same. Most outreach is stuck at Level 1 pretending to be Level 2. Almost nobody operates at Level 3 because, until recently, it was impossible at volume.

Level 1: Token personalisation. {{first_name}}, {{company_name}}, {{industry}}. The merge fields. This is what most outreach tools mean by "personalised." It's table stakes. Every competitor does it. Prospects don't notice it when it's done right and actively distrust you when it's done wrong ("Hi {{first_name}}, I noticed {{company_name}} is...").

Token personalisation tells the prospect you have their contact details. It doesn't tell them you understand their business. Reply rate ceiling: roughly 3%.

Level 2: Context personalisation. You reference something about the company. A recent blog post, a product launch, a job posting. "I saw you're hiring a content manager. Sounds like content is becoming a priority." This shows you looked. It's better than Level 1 because it proves effort.

The problem: most context personalisation is surface-level. "I noticed you recently launched a new product." So did 500 other senders. It's Wikipedia-level research presented as insight. The prospect reads it and thinks: "You spent 30 seconds on my About page." Which is exactly what happened.

Reply rate ceiling: roughly 6-8%.

Level 3: Signal personalisation. You reference a specific, recent, relevant signal that connects to a genuine need. "You posted last week about struggling with attribution across 6 ad platforms. We helped a similar company consolidate to 2 platforms and cut CAC by 22%."

This isn't surface research. It's intelligence. It proves you understand their situation, not just their company page. The prospect reads it and thinks: "They actually looked at my business." Because someone, or something, actually did.

Reply rate ceiling: roughly 12-20%, depending on relevance and offer strength.

The gap between levels isn't about writing skill. It's about research depth. Level 1 requires a contact database. Level 2 requires a quick website scan. Level 3 requires scanning LinkedIn activity, job boards, industry news, and company signals to find the specific detail that makes the prospect feel understood.

That research takes 25-30 minutes per prospect when done manually. Which is why almost nobody does it at scale.

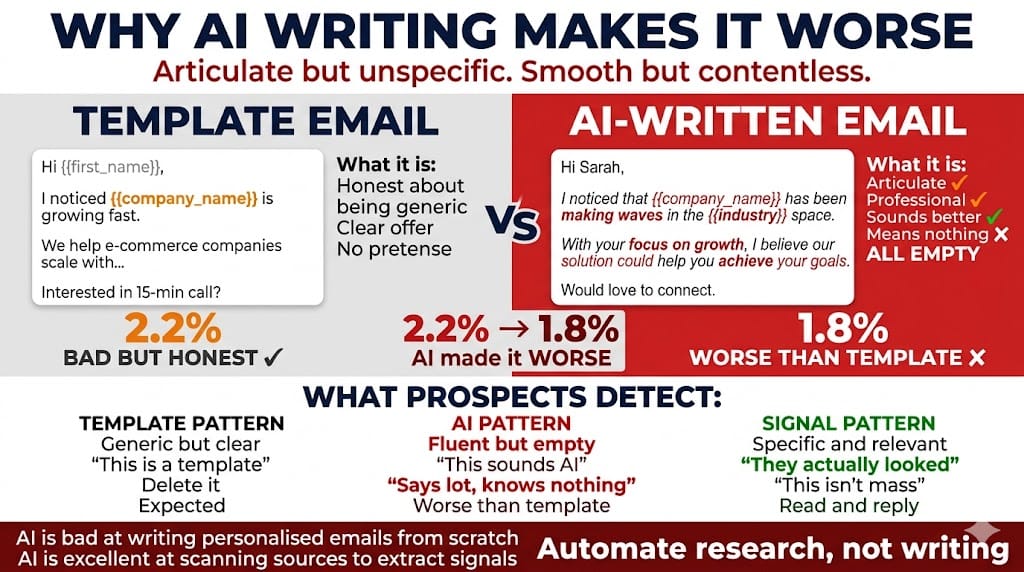

Why AI Writing Makes It Worse

The instinct when reply rates are low: "Let's use AI to write better emails."

This backfires for a specific reason. AI writing tools are good at generating fluent, professional-sounding copy. But they can't generate prospect-specific insight from a name and a URL.

What they produce: "I noticed that {{company_name}} has been making waves in the {{industry}} space. With your focus on growth, I believe our solution could help you achieve your goals."

Confident. Professional. Completely empty.

Prospects detect AI-generated outreach because it has a specific flavour: articulate but unspecific. It says a lot without knowing anything. It's the email equivalent of a politician's answer. Smooth, competent, and contentless.

The result: AI-written outreach often performs worse than good templates. Because templates at least have a clear offer. AI-generated emails have a clear tone and no substance. The Austin agency tested this. Reply rate on AI-written emails: 1.8%. Worse than their 2.2% template baseline. The AI made the emails sound better while making them perform worse.

Here's the distinction that changes everything: AI is bad at writing personalised cold emails from scratch. AI is excellent at scanning a company's website, LinkedIn feed, job postings, and news to extract the 2-3 signals that make a cold email feel researched.

The writing step isn't the bottleneck. The research step is. Automate the research. Keep the human on the writing judgment. That's the order that works.

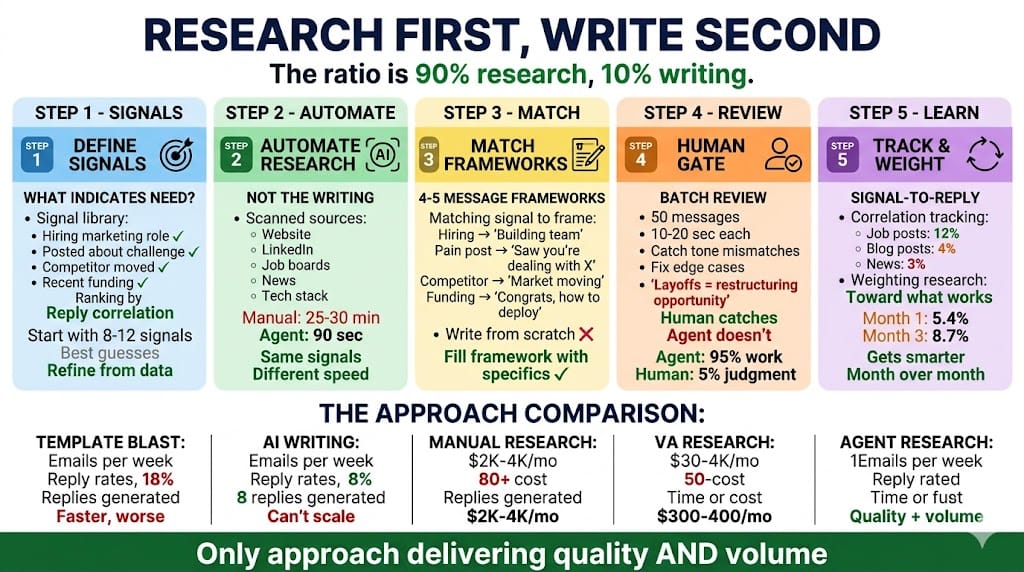

The Research-First Framework

How to build signal-personalised outreach at scale. Five steps.

Step 1: Define your signals. What prospect behaviours or situations indicate they need what you sell? For a marketing agency: hiring a marketing role (building capacity), posting about a specific challenge (advertising the pain), a competitor making a move (creating urgency), recent funding (budget available).

Build a signal library. Eight to twelve signals, ranked by how strongly they correlate with positive replies. You won't know the ranking at first. You'll learn it from data. Start with your best guesses and refine.
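A signal library can be as simple as a ranked list. The sketch below is a minimal illustration in Python, using the four agency signals named above. The `Signal` class, its field names, and the starting weights are hypothetical placeholders: the weights are stand-in guesses to be replaced once reply data comes in, not figures from the Austin agency.

```python
from dataclasses import dataclass


@dataclass
class Signal:
    name: str      # short identifier for the signal
    meaning: str   # why the signal suggests a need
    weight: float  # best-guess strength from 0 to 1 (hypothetical value)


# Four example signals from the marketing-agency case.
# A full library would hold eight to twelve.
SIGNAL_LIBRARY = [
    Signal("hiring_marketing_role", "building capacity", 0.6),
    Signal("posted_about_challenge", "advertising the pain", 0.8),
    Signal("competitor_move", "creating urgency", 0.4),
    Signal("recent_funding", "budget available", 0.5),
]


def ranked(library):
    """Return signals ordered from strongest to weakest weight."""
    return sorted(library, key=lambda s: s.weight, reverse=True)


for signal in ranked(SIGNAL_LIBRARY):
    print(f"{signal.weight:.1f}  {signal.name} ({signal.meaning})")
```

Keeping the weight as a plain number makes it easy to overwrite the guesses with observed reply rates later.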

Step 2: Automate the research, not the writing. For each prospect, scan three to five sources for signals: website, LinkedIn, job boards, news, tech stack. This is where AI agents actually add value: not in writing, but in reading, extracting, and summarising. Time per prospect manually: 25-30 minutes. Time per prospect with an agent: 60-90 seconds. Same sources. Same signals. Different speed.
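To see what that speed difference means at volume, here is the arithmetic as a short Python sketch. It assumes the 500-prospects-per-week volume from the Austin example and assumes prospects are processed one after another; the function name is illustrative.

```python
PROSPECTS_PER_WEEK = 500  # assumed volume, taken from the Austin example


def weekly_hours(prospects, low_seconds, high_seconds):
    """Return (low, high) research hours per week for a per-prospect time range."""
    return (prospects * low_seconds / 3600, prospects * high_seconds / 3600)


manual = weekly_hours(PROSPECTS_PER_WEEK, 25 * 60, 30 * 60)  # 25-30 minutes each
agent = weekly_hours(PROSPECTS_PER_WEEK, 60, 90)             # 60-90 seconds each

print(f"Manual: {manual[0]:.0f}-{manual[1]:.0f} hours per week")
print(f"Agent:  {agent[0]:.1f}-{agent[1]:.1f} hours per week")
```

Manual research at this volume comes to roughly 208 to 250 hours per week, more than five full-time researchers. The agent figure, about 8 to 12.5 hours, is machine runtime rather than human time.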

Step 3: Match signals to message frameworks. Don't write from scratch each time. Have four or five message frameworks that correspond to signal types. Hiring signal gets the "building your team" framework. A pain signal from a LinkedIn post gets the "I saw you're dealing with X" framework. A competitor signal gets the "your market is moving" framework. Funding gets "congratulations, here's how to deploy."

The agent selects the framework based on the signal. The prospect-specific details fill in the blanks. Not token blanks. Genuinely specific context that proves intelligence.
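The matching step can be sketched as a lookup from signal type to framework. This is a minimal illustration, not the agency's actual system: the `FRAMEWORKS` dictionary, the signal-type keys, and the opener wording are all hypothetical, loosely based on the framework names above.

```python
# Framework openers keyed by signal type. The wording is placeholder text.
FRAMEWORKS = {
    "hiring": "Saw you're hiring a {detail}. Looks like you're building your team.",
    "pain": "I saw you're dealing with {detail}.",
    "competitor": "Your market is moving: {detail}.",
    "funding": "Congratulations on {detail}. Here's one way to deploy it.",
}


def draft_opener(signal_type, detail):
    """Pick the framework for a signal type and fill in the prospect-specific detail.

    Returns None when no framework matches, so the prospect can be routed
    to a human instead of receiving a generic line.
    """
    template = FRAMEWORKS.get(signal_type)
    if template is None:
        return None
    return template.format(detail=detail)


print(draft_opener("pain", "attribution across 6 ad platforms"))
```

The detail passed in is the researched signal, not a merge field. Returning `None` for an unmatched signal is a deliberate choice here: no opener is better than a generic one.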

Step 4: Human quality gate. Every message gets reviewed before sending. Batch review: 50 messages at 10-20 seconds each, roughly 8 to 17 minutes per batch. The human catches tone mismatches, wrong signals, and edge cases. Referencing a company's layoffs as a "restructuring opportunity" doesn't land well. A human catches that. An agent doesn't.

The agent does 95% of the work. The human supplies the last 5%: judgment. That 5% is the difference between "impressive personalisation" and "tone-deaf automation."

Step 5: Track signal-to-reply correlation. Not all signals are equal. Job posting signals might drive 12% reply rates. Blog post signals might drive 4%. Over time, weight the research toward the signals that actually work. This is the feedback loop that improves performance month over month. The Austin agency saw reply rates climb from 5.4% in month one to 8.7% in month three almost entirely from this learning loop.
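The feedback loop comes down to counting sends and replies per signal. The sketch below assumes each sent email is logged as a (signal, replied) pair; the log format, the function name, and the sample data are made up for illustration and are not the agency's records.

```python
from collections import defaultdict


def reply_rate_by_signal(sent_log):
    """Compute the reply rate per signal from (signal, replied) records."""
    sent = defaultdict(int)
    replies = defaultdict(int)
    for signal, replied in sent_log:
        sent[signal] += 1
        if replied:
            replies[signal] += 1
    return {signal: replies[signal] / sent[signal] for signal in sent}


# Made-up log: 25 job-posting emails with 3 replies, 25 blog-post emails with 1 reply.
log = (
    [("job_posting", True)] * 3 + [("job_posting", False)] * 22
    + [("blog_post", True)] * 1 + [("blog_post", False)] * 24
)

rates = reply_rate_by_signal(log)
for signal, rate in sorted(rates.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{signal}: {rate:.0%}")
```

With samples this small the rates are noisy, so it makes sense to let a few weeks of sends accumulate before reweighting the signal library.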

What the Numbers Actually Look Like

Here's the comparison across approaches, using real data from the blueprint series and industry benchmarks:

Template blast: 500 per week, 2-3% reply rate, 5-7 positive replies, 5 hours per week in time cost.

AI-written emails: 500 per week, 1.5-2.5% reply rate, 4-6 positive replies, 3 hours per week. Faster to produce, worse results.

Manual research: 20-30 per week, 25-35% reply rate, 5-10 positive replies, 15-plus hours per week. Best conversion but impossible volume.

VA research: 100-150 per week, 8-12% reply rate, 8-18 positive replies, $2K-$4K per month. Better quality, still limited volume, management overhead.

Agent research with human review: 500 per week, 7-12% reply rate, 35-60 positive replies, 4-6 hours per week plus $300-$400 per month.

The agent approach doesn't have the highest reply rate. Manual research on 20 dream clients will always convert better. But the agent approach is the only one that delivers quality and volume simultaneously.

The Austin agency: 500 emails per week at 8.7% by month three. Twenty-five-plus conversations per week. Three clients closed in Q1. Pipeline entering Q2 with 14 active conversations instead of the typical 3-4. The compound effect is what matters. An 8.7% reply rate at 500 per week creates a pipeline that a 2.2% rate at the same volume never could.
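The gap between those two reply rates is easy to check. A minimal Python sketch, using only the volume and rates quoted above (the function name is illustrative):

```python
def replies_per_week(emails_per_week, reply_rate):
    """Expected replies per week at a given send volume and reply rate."""
    return emails_per_week * reply_rate


baseline = replies_per_week(500, 0.022)  # template baseline
improved = replies_per_week(500, 0.087)  # month-three rate

print(f"Baseline: {baseline:.1f} replies per week")
print(f"Improved: {improved:.1f} replies per week")
print(f"Ratio: {improved / baseline:.1f}x")
```

That is about 11 replies per week against about 43.5, roughly a fourfold difference at the same send volume. These are total replies; not every one becomes a conversation.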

The Ten-Email Test

Check your reply rate. If it's under 4%, the problem isn't your list.

Pull ten of your most recent outreach emails. Read them as if you're the prospect receiving them on a Tuesday morning alongside 40 other messages. Ask one question: "Does this email prove they know anything specific about my business?"

Not about my industry. Not about my job title. About my business. My situation. My recent decisions. My current challenges.

If the answer is no, you have a personalisation gap. Not a template problem. Not a targeting problem. Not a deliverability problem. A research problem.

And research problems don't get solved by better writing tools. They get solved by better research systems. The writing is the last 10% of a cold email. The research is the first 90%. Most businesses have the ratio inverted, spending all their time on copy and none on intelligence.

Flip it. Research first. Write second. Let the specificity do the selling.

Want to see if your outreach has a personalisation gap? The AI Diagnostic takes 10 minutes and shows you where volume is killing quality. No pitch.

Want to see 37 real stories of SMBs that fixed the gap? Download Unstuck. Every one found the same thing: the list was fine. The message wasn't.

by SP, CEO

for the AdAI Ed. Team